Writing test scripts does not always go as smoothly as planned. Sometimes even seemingly easy tests take far too long to get right. Especially when you are just starting to write tests, plenty of issues can pop up. These issues can be in the website itself or in the test script. Debugging is one of the most important skills any automation tester should learn, and there are many ways to debug a test script. In this blog post we will show you some of them so you can start debugging your automated testing scripts right away.

Local testing

One of the easiest ways to validate your script is to run it locally on your own machine. If the automated tests normally run on remote devices or on a cloud platform like Loadero, watching a local run and intervening manually can give a hint about where the issue might lie. It is important that the local environment matches the remote one (for example, the same browser and framework versions), so that no errors come from inconsistencies in the configuration. Pay attention to this when you start debugging your test script with local testing. Our beginner’s guide to test automation with Nightwatch.js explains local setup and launching tests. Take a look if you plan to test locally.

If you want to try this method, you will need one of the two frameworks that we support in Loadero: Nightwatch.js or TestUI.

Verbose Selenium logging

If verbose logging is enabled, more of the framework's actions are logged. With some frameworks, such as Nightwatch, these messages include API calls and their responses. From the responses it is possible to determine which element was found or manipulated. For example, such logs could indicate that a browser alert was triggered or that an element is not available. TestUI, on the other hand, logs every action performed in the test when verbose logging is enabled. Without it, only the test status is logged at the end of the test.

The only downside of enabling verbose logging is that it clutters the logs and can make them much harder to read.

Verbose logs can help a lot with test script debugging, and in Loadero all test participants have access to verbose Selenium logs in the performance test mode. Check out our Wiki page to get more information about test modes!

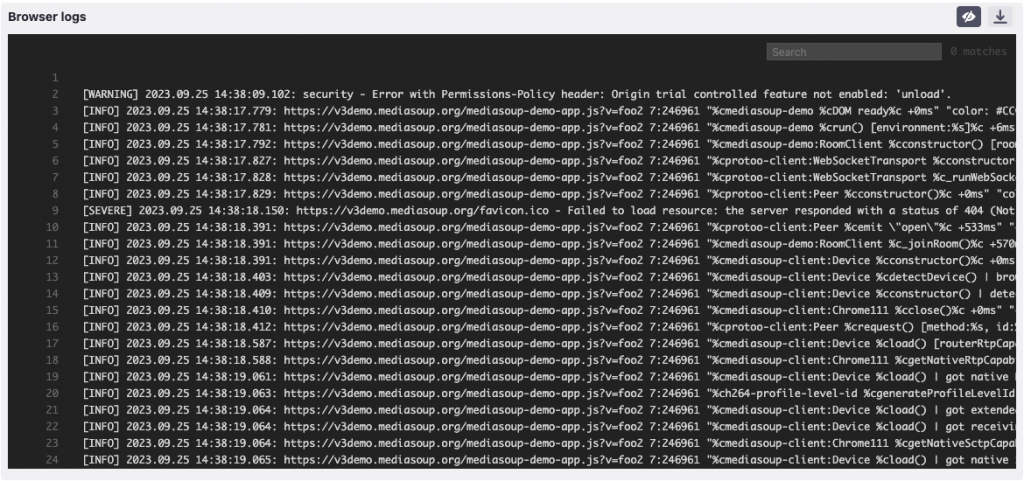

Console logs

When a test fails, always check the browser console logs. Most of the time they are empty or contain just a couple of warnings. But if the website has thrown any errors during test execution, the console logs are where you will find them. Those errors are on the website's side and can have any number of causes. During automated tests they usually occur on button clicks, because a click is an action that triggers extra functionality on the website's side.

Session recording

This is a way of test script debugging that we are proud to offer to users of our service. Loadero has a test mode that records video of the whole test. This makes it possible to visually validate the test actions and make sure no unexpected elements or alerts appear. With session recording it is easier to detect problems with a changing UI, for example unexpected redirects. Such issues are easy to miss in logs. A page redirect may leave no log entries at all, and the engineer is left in the dark about what caused the test to fail.

In addition, session recordings can be saved and used later to investigate what caused the issues and to look for visual improvements, for example in group call video quality. A recording can also give an indication of UI usability. Recording a session with network conditions set can give insights into how the application behaves when a user has a worse connection. Learn more about testing with different network conditions from this blog post. User experience is very important. After all, the visual side of the application is the first thing the user encounters.

Recording video consumes machine resources, so when using session recording keep in mind that the system may run slower under the extra load, especially if the website being tested is itself very resource intensive.

Screenshots

Without a doubt, the easiest and quickest way to debug your automated testing script is to use screenshots. Both TestUI and Nightwatch support taking screenshots during script execution. In Loadero these screenshots can be taken using our custom commands. If you already have some tests in Loadero or are just planning to create some, make sure to add the commands that take screenshots. Our Wiki explains how to do that in Nightwatch.js. After test execution the screenshots can be found in the participant results view under the Artifacts tab. More on screenshots and the Loadero test results view is explained in this blog post about results reports.

In practice, there is hardly any downside to using screenshots. They don’t require a lot of machine resources and do not interfere with the test itself. As a matter of fact, we recommend taking screenshots at least at the main points where problems are likely to occur. That can save the time and cost of relaunching the test.

Check the scenario manually

While writing tests we strongly recommend opening the website in a fresh incognito or private tab. This rules out interference from previously saved settings and cached data. The simplest things, like cookie banners or pop-ups, are often forgotten. They are usually shown to new visitors, and such elements can get in the way of the website’s UI. Captchas also tend to appear more often for new visitors nowadays. If that is the case, sadly there is no out-of-the-box way to bypass them.

There are many more techniques for test script debugging; we explained just six to help you get started. Some of the described approaches are useful beyond debugging. Session recordings and screenshots are also very useful in performance testing. Sign up for our free trial and run multiple performance tests free of charge. Use the debugging techniques to prepare your tests for future large-scale testing. If you need any assistance along the way, contact our helpful support team at support@loadero.com.