We have previously written about how you can automate your testing routines through the Loadero API instead of the graphical interface. In this blog post we will show how you can integrate performance and load tests into your CI/CD workflows with the help of GitHub Actions. GitHub Actions lets you automate and execute your development workflows directly from your repository, which makes the integration very simple. We will take a look at how to create a workflow and an action in GitHub, and we will test the integration with Loadero by launching a test run from GitHub Actions.

Prerequisites

- Access to Loadero’s API

- Recommended reading: “Integrating Tests To Your Development Pipeline. Quickstart Guide To Loadero API”

- Familiarity with JavaScript and its ecosystem

- Basic knowledge of CI/CD practices

Let’s imagine that multiple contributors are working on a product, and our task is to ensure that no regressions occur when new functionality is added. To maintain the quality of the product, we could run UI automation or load tests every time new code is approved and merged. We will use Loadero for this task, as it suits this case well.

Our action plan will consist of 3 steps:

- Create a workflow that will run every time new code is merged into the main branch

- Create a custom Action that will be used in the workflow to execute Loadero tests

- Test the integration

Workflow

To begin with, let’s create a workflow that will run when a new pull request gets approved and merged to the main branch of our repository.

A workflow is a configurable automated process that consists of one or multiple steps. The workflow is defined as a YAML file.

In the root of our project let’s create a hidden directory .github and inside this directory, another directory called workflows. Inside workflows let’s create a YAML file ci-workflow.yml.

This configuration file will set the following:

- name of workflow

- when this workflow should be run

- condition that should be satisfied before jobs can start running

- what jobs it should run

- what steps the job should go through and its environment

- what custom action it should use

    on:
      pull_request:
        branches:
          - master
        types: [closed]

    jobs:
      loadero-test:
        if: github.event.pull_request.merged == true
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v2
          - uses: ./

Action

In the next step, let’s create a custom action that will execute the code needed to interact with Loadero via the API.

Actions are standalone commands that are combined into steps to create a job your workflow uses. You can create actions yourself or get them from the GitHub community.

To create a custom action, in the root of the project, we need to create a metadata YAML file called action.yml with the following code:

    name: "Loadero test"
    description: "Runs Loadero test"
    author: "Loadero"
    runs:
      using: "node12"
      main: "loadero-action/dist/index.js"

In this metadata file you can define inputs, outputs, the entry point for our action and what environment it should use. We don’t need to define any inputs or outputs for our example, so we just add some basic metadata, set the environment and the entrypoint for our action.
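Although our example needs no inputs, it may help to see how an input would reach the action's code. GitHub exposes each input declared in action.yml to the action as an environment variable named `INPUT_<NAME>`, which is what `core.getInput` from @actions/core reads. The sketch below mimics that lookup in plain JavaScript. The `readInput` helper and the `test-id` input name are hypothetical and used only for illustration.

```javascript
// Minimal sketch of how an action input is looked up.
// `readInput` is a hypothetical helper; in a real action you would
// normally call core.getInput("test-id") from @actions/core instead.
const readInput = (name, env = process.env) => {
  // Inputs are exposed as INPUT_<NAME>: upper-cased, spaces replaced by underscores
  const key = `INPUT_${name.replace(/ /g, "_").toUpperCase()}`;
  return (env[key] || "").trim();
};

// Example with a fake environment, as if action.yml declared a "test-id" input
const fakeEnv = { "INPUT_TEST-ID": " 1234 " };
console.log(readInput("test-id", fakeEnv)); // prints "1234"
```

Passing values such as the test ID this way would be one option for avoiding hardcoded values in the action source.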

After this file has been created, in the root directory, we need to create a directory called loadero-action. In this directory we’re going to store all action-related files and it will act as a standalone NPM project. Inside this directory, we want to create a dist directory where our compiled action file will be put later.

Now, let’s create our custom action which will execute the code we need to interact with Loadero.

In the loadero-action directory, we will create a file called action.js. This action will be responsible for running the test in Loadero and polling its status every 5 seconds until the test is finished or aborted. The dummy Loadero test we’re going to use is not relevant to our imaginary project and is used for the sake of simplicity of this guide. In reality, with Loadero you could run a load test or a UI automation test on a project you’re working on to ensure that the quality of your service has not been degraded after new codebase changes.

Let’s get back to our Action. Before writing any code we need to install two NPM packages: node-fetch for making HTTP requests and @actions/core, which provides the core GitHub Actions functions for things like logging output and failing a job in a workflow.

The action.js file should have the following code:

    import * as core from "@actions/core";
    import fetch from "node-fetch";

    // Loadero API base URL
    const BASE_URL = "https://api.loadero.com/v2/projects";

    // The ID of the project we are working with
    const PROJECT_ID = <Your project ID>;

    // The ID of the test we want to run
    const TEST_ID = <Your test ID>;

    // Request options with authorization header
    const OPTIONS = {
      headers: {
        Authorization: "LoaderoAuth <Your access token>",
      },
    };

    // Start a new run of the test; resolves with the parsed JSON response
    const startTest = async () => {
      const url = `${BASE_URL}/${PROJECT_ID}/tests/${TEST_ID}/runs/`;
      const response = await fetch(url, { ...OPTIONS, method: "POST" });
      return response.json();
    };

    // Poll the list of active runs every 5 seconds until the run finishes
    const checkTestStatus = async (testRun) => {
      core.info("Checking status of test ...");
      try {
        const url = `${BASE_URL}/${PROJECT_ID}/runs/?filter_active=true`;
        const response = await fetch(url, { ...OPTIONS, method: "GET" });
        const data = await response.json();
        const runningTest = data.results.find((test) => test.id === testRun);

        // The run is no longer listed as active: treat it as failed or aborted
        if (!runningTest) {
          core.setFailed("Test failed");
          return;
        }

        if (runningTest.success_rate === 1) {
          core.notice("Test success");
          return;
        }

        // Not finished yet: check again in 5 seconds
        setTimeout(() => checkTestStatus(testRun), 5000);
      } catch (error) {
        core.setFailed(`Something went wrong: ${error.message}`);
      }
    };

    // Run test
    startTest()
      .then((test) => {
        checkTestStatus(test.id);
      })
      .catch((error) => {
        core.setFailed(`Test failed: ${error.message}`);
      });

Let’s quickly go through the code and see what it does:

- First we import everything from the @actions/core package and fetch from node-fetch for later use.

- Then, we create constants to work with Loadero API (read more about how to use Loadero API here)

- Next, we define an async function called startTest which will fire a POST request to run a Loadero test. This function will return a promise that will be resolved with the response of the POST request, which contains the ID of the started test run.

- Then, we define another async function called checkTestStatus which will accept the ID of the running test as an argument, and will be called recursively every 5 seconds to poll the status of the running test. There are three base cases in which this function returns without making a recursive call, which stops the execution:

  - The API doesn’t return any results for the running test, meaning the test has failed or been aborted

  - The result object has a success_rate key with the value of 1, meaning the test has passed

  - An error was thrown during the request execution, meaning that there was some sort of network error.
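To make the outcome of a single polling round easier to see, the decision can be sketched as a small pure function. This is an illustration, not part of the action itself: `classifyRun` is a hypothetical helper, and the shape of `results` (objects with `id` and `success_rate`) is assumed from the code above. The third base case, a thrown error, is not covered here because the action handles it in its try/catch block.

```javascript
// Sketch of the decision made in one polling round.
// `classifyRun` is a hypothetical helper; `results` is assumed to be
// the list of active runs returned by the API.
const classifyRun = (results, runId) => {
  const run = results.find((item) => item.id === runId);
  if (!run) return "failed"; // run is no longer listed as active
  if (run.success_rate === 1) return "passed";
  return "pending"; // still running, poll again later
};

// Example: a run that is still in progress
console.log(classifyRun([{ id: 42, success_rate: 0.5 }], 42)); // prints "pending"
```

Keeping this decision separate from the network call would make it possible to check the logic without contacting the API.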

If the test fails, we want to terminate the execution of our Action and mark the step in the workflow as failed. For this we use the setFailed method from the @actions/core package. To log messages while our workflow is running, we can use the info and notice methods from the same package.

With our functions defined we can finally conclude our Action by starting the Loadero test and polling its status while the test is running. In order to do this, we call the startTest function and use a promise-chained construction to call the checkTestStatus function.
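The same start-then-poll flow can also be written as a loop with async/await instead of recursive setTimeout calls. The following is a sketch rather than the code used in this post: `pollUntilDone`, `checkOnce` and `sleep` are hypothetical names, and `checkOnce` stands in for a single status request that resolves to "passed", "failed" or "pending".

```javascript
// Resolve after the given number of milliseconds
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Call checkOnce() repeatedly until it reports a final state.
// checkOnce is any async function resolving to "passed", "failed" or "pending".
const pollUntilDone = async (checkOnce, intervalMs = 5000) => {
  for (;;) {
    const state = await checkOnce();
    if (state !== "pending") return state;
    await sleep(intervalMs);
  }
};

// Usage with a stubbed status check that finishes on the third call
const states = ["pending", "pending", "passed"];
pollUntilDone(async () => states.shift(), 10).then((state) => {
  console.log(`Final state: ${state}`); // prints "Final state: passed"
});
```

With this shape the caller gets a single promise for the final state, so it could call setFailed in one place once polling ends.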

The last step of creating a custom action is to make the action.js file runnable, meaning that we want all its dependencies to be compiled into a single file together with the action’s source code. To help with this we need to install another npm package called @vercel/ncc. The ncc package basically compiles the entire Node.js project into a single file. With this package installed we need to define a build script in the package.json file to output the compiled file to our previously created dist directory. The package.json file should be configured like this:

    {
      "name": "loadero-action",
      "version": "1.0.0",
      "description": "Runs Loadero test",
      "main": "index.js",
      "scripts": {
        "build": "ncc build action.js -o dist"
      },
      "author": "Loadero",
      "license": "ISC",
      "dependencies": {
        "@actions/core": "1.5.0",
        "@vercel/ncc": "0.30.0",
        "node-fetch": "3.0.0"
      }
    }

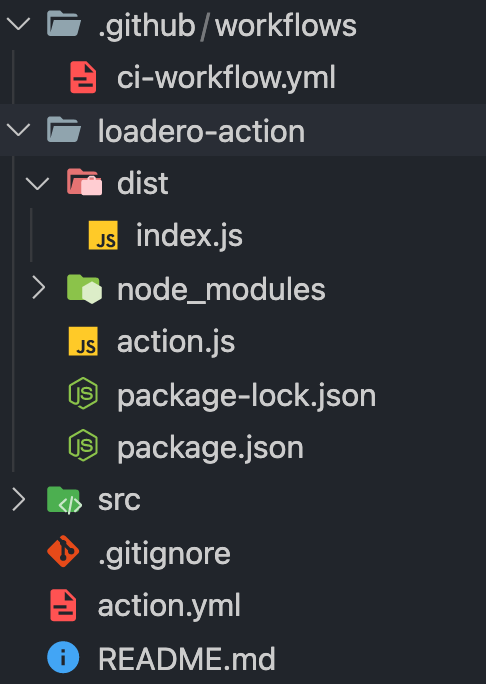

With all files created our project structure should look like this:

Test the integration

That’s it! Our workflow is ready to be tested. But before we can test it, we want to bundle our custom action into a single file by running npm run build inside the loadero-action directory. After our action has been compiled, we need to commit the local changes and push them to GitHub.

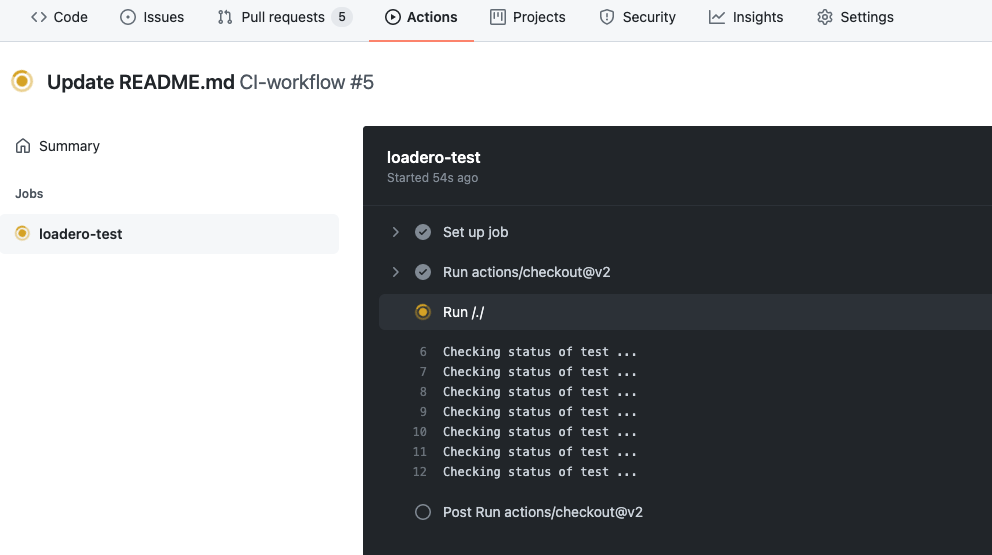

To test the Loadero integration, we can simply create and approve a new pull request by changing the README file contents of our repository right in the GitHub web interface. Approving and merging the PR will trigger the workflow we created earlier. If we navigate to the Actions tab, we will see that the workflow has started running.

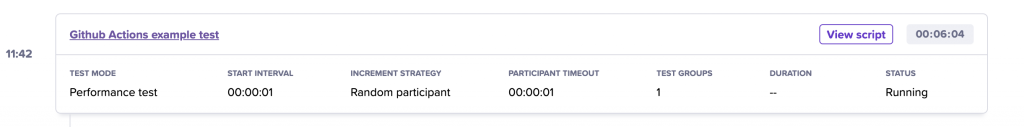

Now, if we go to the Loadero web interface and check the test results for our project, we’ll see that our Loadero test has started running.

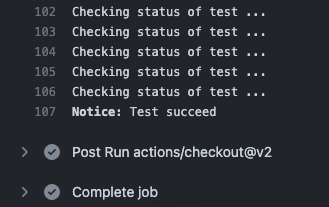

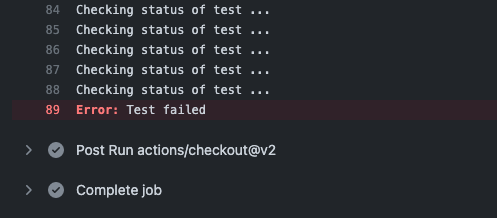

Then, if we go back to GitHub and click on our workflow, we will see that our loadero-test job is running and the “checking status of test” message is being logged every 5 seconds, just like we defined it in our Action code.

Once the Loadero test is finished running and is successful, we will get our workflow marked as green. Since we don’t have any other jobs defined, our whole workflow will be marked as successful.

If the test had failed or was aborted, then the workflow would have been terminated and marked red.

That’s it! You have successfully run and tested your Loadero integration using a GitHub Actions workflow!

Summary

Today you’ve seen how to integrate performance tests into your CI/CD pipelines using GitHub Actions. A similar approach can be used for other integrations too. We have created a workflow and a custom action to set up the integration. Of course, in a real-world scenario you would add other steps to your workflow, such as unit testing, linting and anything else that suits your needs. Integrations like this save your team’s time: tests are launched automatically, and you can focus on other important tasks.