Welcome back to Loadero’s monthly newsletter, and a happy new year to you all! Last month we implemented several fixes as well as some brand new functionality, all of which is covered in this article.

Warning: To increase computational capacity, Loadero is currently working on implementing expiration for logs and artifacts. This also doubles as a data protection measure, since logs and artifacts will no longer be available permanently but will be deleted from Loadero’s systems a certain amount of time after the run’s conclusion. Once this change goes live, you will have a limited amount of time, depending on your payment plan, to collect logs and artifacts from past runs that you may want to reference in the future. We are letting you know about this feature ahead of time so that you can get a head start on archiving any information you deem important. Keep in mind that when logs expire, machine charts and WebRTC charts for that test’s report will no longer be available either.

New compute units

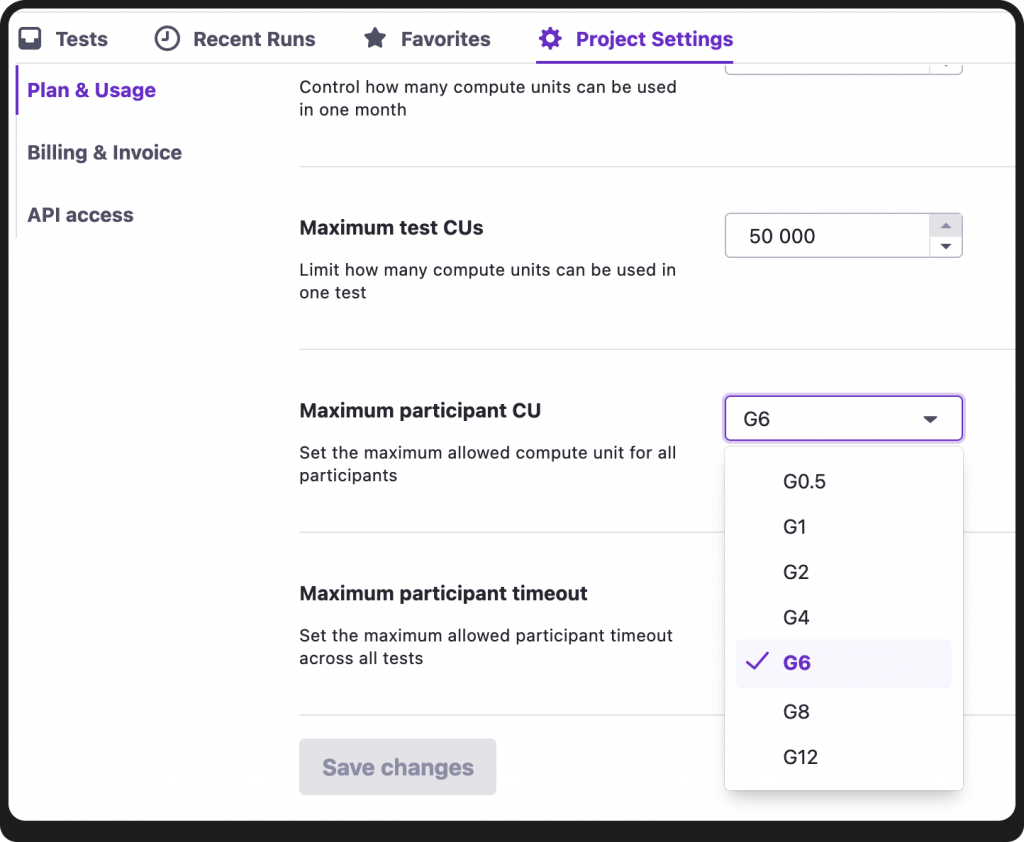

Up until recently the highest compute unit you could assign to a participant was G6, corresponding to 3 CPU cores and 6 GB of RAM. We were notified that some users were testing applications that were demanding enough that even G6 was struggling to keep up. As such, we have introduced two new compute unit values – G8 (4 CPU cores; 8 GB of RAM) and G12 (6 CPU cores; 12 GB of RAM).

These new compute units are only available for projects with an Ultimate or Enterprise plan. If you already have an Ultimate plan but are still unable to allocate G8 or G12 to your participants, check your subscription settings to see the maximum compute unit allowed for the project.

When creating/editing participants you may notice that the order of available compute unit options is a bit awkward. We are aware of this and a fix is in the works.

E-model MOS

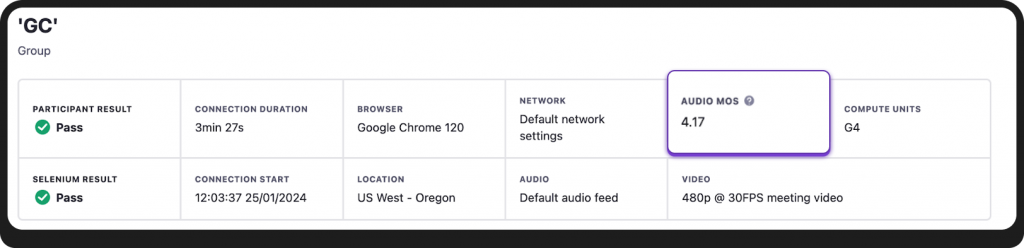

Users are now able to evaluate call quality via E-model MOS in their WebRTC calls. This gives users a quick and simple way to gauge the audio quality of the call that took place during the test. MOS (Mean Opinion Score) is graded on a scale of 1 to 5, where 1 indicates incredibly poor call quality and 5 indicates perfect quality. The calculations are done automatically and you can see the value in each participant’s report, in the Audio MOS field.
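For readers curious how an E-model score ends up on the MOS scale, here is a minimal sketch of the standard conversion from the E-model rating factor R to an estimated MOS, as defined in ITU-T G.107. This article does not describe Loadero's exact calculation, so treat this only as background on the general technique: the function name `r_factor_to_mos` is hypothetical, and the sketch assumes R has already been derived from call metrics such as delay, jitter, and packet loss. Note that with this standard mapping, the estimate tops out at 4.5.

```python
def r_factor_to_mos(r: float) -> float:
    """Convert an E-model rating factor R into an estimated MOS (ITU-T G.107 mapping).

    Illustrative only: not taken from Loadero's implementation.
    """
    if r <= 0:
        return 1.0  # worst possible estimate
    if r >= 100:
        return 4.5  # the mapping saturates at 4.5
    mos = 1.0 + 0.035 * r + r * (r - 60.0) * (100.0 - r) * 7e-6
    # The polynomial dips marginally below 1 for very small R,
    # so clamp to keep the result on the MOS scale.
    return max(1.0, mos)


if __name__ == "__main__":
    for r in (50.0, 70.0, 93.2):
        print(f"R = {r:5.1f} -> estimated MOS {r_factor_to_mos(r):.2f}")
```

In this mapping, a higher R always gives an equal or higher MOS within the usable range, which is why a single averaged value is a convenient summary of audio quality.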

Additionally, you will be able to create asserts for this value by asserting mos/audio/e-model/avg.
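As a rough illustration of what such an assert expresses, the sketch below models an assert as a metric path, a comparison operator, and an expected value, then evaluates it locally against a measured MOS. Only the path `mos/audio/e-model/avg` comes from this article. The field names (`path`, `operator`, `expected`), the operator keywords, the threshold of 3.5, and the helper `assert_passes` are assumptions made for illustration, so check Loadero's documentation for the actual assert format.

```python
import operator

# Hypothetical assert definition. Field names and operator keywords are
# assumptions; only the metric path is taken from the article.
mos_assert = {
    "path": "mos/audio/e-model/avg",
    "operator": "gte",
    "expected": 3.5,  # arbitrary example threshold, not a recommendation
}

# Map the assumed operator keywords to Python comparison functions.
_OPERATORS = {
    "gt": operator.gt,
    "gte": operator.ge,
    "lt": operator.lt,
    "lte": operator.le,
    "eq": operator.eq,
}


def assert_passes(assert_def: dict, measured: float) -> bool:
    """Return True if the measured value satisfies the assert definition."""
    compare = _OPERATORS[assert_def["operator"]]
    return compare(measured, assert_def["expected"])


if __name__ == "__main__":
    for measured in (4.1, 2.9):
        verdict = "pass" if assert_passes(mos_assert, measured) else "fail"
        print(f"{mos_assert['path']} = {measured}: {verdict}")
```

With an assert like this in place, a participant whose average E-model MOS falls below the chosen threshold would be flagged as failing, without anyone needing to open the report manually.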

Note: E-model MOS will only be calculated for participants with Google Chrome as their browser. Sadly, Mozilla Firefox does not provide all the WebRTC metrics necessary to enable this MOS calculation algorithm.

Raised participant limit for performance tests

Each of Loadero’s test modes comes with its benefits and drawbacks. There is a trade-off between the performance test and load test modes: load tests can run far more participants, but performance tests offer client-side machine metrics, far more detailed Selenium logs, and the ability to access files downloaded during the test in the results report. To ensure that users do not have to give up the benefits of performance test mode too early, we have raised the participant limit in performance tests from 50 to 200. If your project has tests with no more than 200 participants that are set to use load test mode, we suggest switching these tests to performance test mode instead.

Tests list overhaul

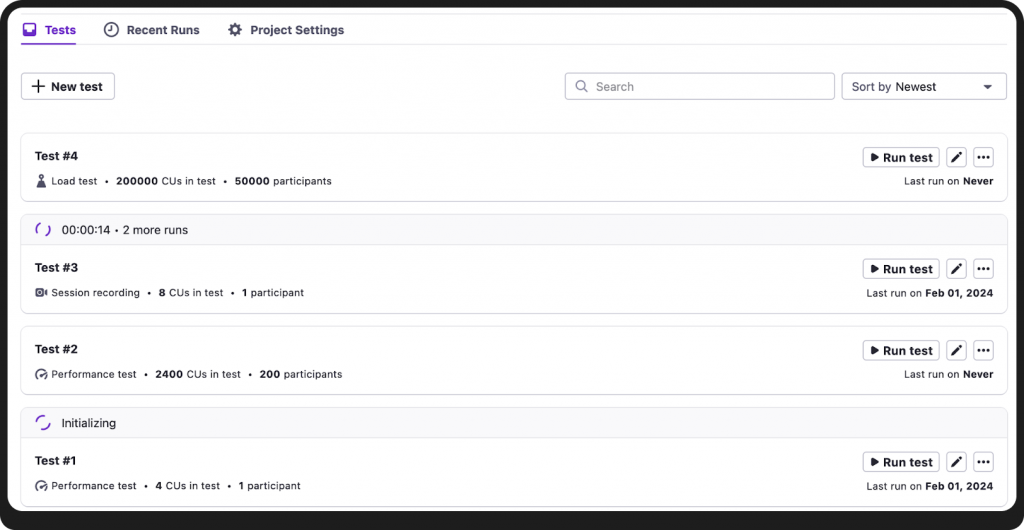

If you are reading this article, it is quite likely that you are already aware of this change – the tests list has undergone a complete overhaul, both visual and functional.

A rundown of the functional changes:

- You can now launch multiple runs of the same test by just clicking “Run test” repeatedly.

- Aborting a run from the tests list is no longer possible. This now has to be done from the “Recent runs” tab, where you choose which particular run you want to stop.

- Each test now shows the date of its last run.

- The tests list is now sortable. You can use three sorting strategies – newest, last updated, and last run date.

Various fixes

Stability: Adjusted some logic to significantly reduce the chance of users encountering AWS errors. Additionally, for a period during the past month Loadero had migrated to Chrome for Testing browsers instead of regular Google Chrome. However, we discovered that Chrome for Testing worsened participants’ performance metrics, indicating that it did not accurately portray what a real user might experience. As such, we have reverted to regular Google Chrome browsers.

Documentation: Improved API Swagger documentation. You can find more information about our API here.

User Experience: Several small changes to the UI to give it a more intuitive and consistent feel.

Support for new browser versions

Lastly, we have added support for:

- Google Chrome 121;

- Mozilla Firefox 122.

That is all that changed within the past month! We hope to give you an update on more changes at the beginning of March.